Commit Control keeps the commit level for each provider at a pre-configured level. It includes bandwidth control algorithms for each provider as well as active traffic rerouting in case bandwidth for a specific provider exceeds the configured limit. Commit Control also includes passive load adjustments inside each provider group.

A parameter called “precedence” (see peer.X.precedence) sets the traffic unloading priorities, depending on the configured bandwidth cost and provider throughput. The platform reroutes excess bandwidth to providers whose current load is below their 95th percentile. If all providers are overloaded, traffic is rerouted to the provider with the smallest precedence; usually this provider has either the highest available throughput or the lowest cost. The higher the precedence, the lower the probability that traffic is sent to a provider when its pre-configured 95th percentile usage is higher.
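The selection rule described above can be sketched as follows. This is a minimal illustration, not IRP's implementation: the `Provider` fields, the `reroute_targets` function and the choice to try lower precedence first among non-overloaded providers are assumptions made for the example.

```python
from dataclasses import dataclass


@dataclass
class Provider:
    name: str
    precedence: int      # value of peer.X.precedence
    load_mbps: float     # current bandwidth usage (hypothetical field)
    limit_mbps: float    # configured 95th percentile level (hypothetical field)


def reroute_targets(providers):
    """Pick providers that may receive traffic unloaded from an overloaded one."""
    # Preferred targets: providers still below their 95th percentile level.
    below_limit = [p for p in providers if p.load_mbps < p.limit_mbps]
    if below_limit:
        # Assumption: among these, lower precedence is tried first.
        return sorted(below_limit, key=lambda p: p.precedence)
    # All providers are overloaded: fall back to the smallest precedence.
    return [min(providers, key=lambda p: p.precedence)]


providers = [
    Provider("A", precedence=10, load_mbps=900, limit_mbps=1000),
    Provider("B", precedence=20, load_mbps=1100, limit_mbps=1000),
    Provider("C", precedence=30, load_mbps=500, limit_mbps=1000),
]
print([p.name for p in reroute_targets(providers)])
```

Here provider B is above its level, so only A and C are offered as targets.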

See also: core.commit_control.

1.3.3.1 Flexible aggressiveness of Commit algorithm based on past overloads

Metering bandwidth usage by the 95th percentile has an alternative interpretation: the customer is allowed to exceed the limit 5% of the time. As such, IRP assumes a schedule of overloads based on the current time within the month and the actual number of overloads already made.
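As a rough illustration of this interpretation, the sketch below computes an overload budget and compares it with a schedule. The 5-minute sampling interval, the 30-day month, the linear schedule and all function names are assumptions for the example; the source does not state how IRP builds its schedule.

```python
def allowed_overloads(samples_in_month: int) -> int:
    """Number of samples that may exceed the commit level (top 5%)."""
    return samples_in_month * 5 // 100


def scheduled_remaining(samples_in_month: int, samples_elapsed: int) -> float:
    """Assumed linear schedule: the budget is spent evenly over the month."""
    total = allowed_overloads(samples_in_month)
    return total * (samples_in_month - samples_elapsed) / samples_in_month


def actual_remaining(samples_in_month: int, overloads_so_far: int) -> int:
    """Overloads still available after those already made."""
    return allowed_overloads(samples_in_month) - overloads_so_far


# Assumption: 5-minute samples over a 30-day month -> 288 * 30 samples.
SAMPLES = 288 * 30
halfway = SAMPLES // 2

print(allowed_overloads(SAMPLES))             # total budget for the month
print(scheduled_remaining(SAMPLES, halfway))  # budget the schedule expects to remain
print(actual_remaining(SAMPLES, 300))         # budget that really remains
```

With these numbers the actual remaining budget is lower than the scheduled one halfway through the month, which is the situation in which the algorithm becomes more aggressive.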

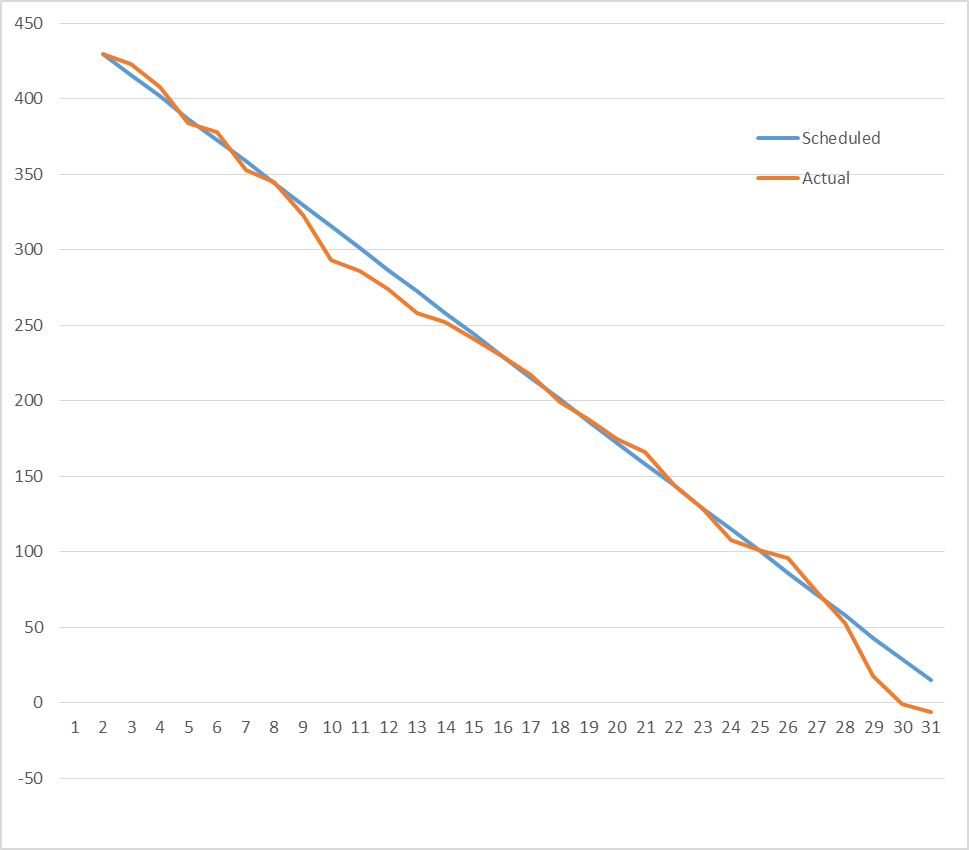

Figure 1.3.3: More aggressive when actual exceeds schedule

The sample image above shows the remaining scheduled and actual amounts of allowed overloads decreasing over the month. Whenever IRP detects that the actual line goes below the schedule (meaning the number of actual overloads exceeds what is planned), it increases its aggressiveness by starting to unload traffic earlier than usual. For example, if the commit level is set at 1Gbps, IRP may start unloading traffic at 90% or 80% of that level, depending on the past overloads count.

The least aggressive level is set at 99% and the most aggressive level is constrained by configuration parameter core.commit_control.rate.low + 1%.
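The bounds above can be illustrated with a small sketch. Only the two bounds (99% and core.commit_control.rate.low + 1%) come from the text; the linear interpolation between them, the function name and the sample value of 80 for rate.low are assumptions made for the example.

```python
def unload_threshold_pct(scheduled_remaining: float,
                         actual_remaining: float,
                         rate_low_pct: float) -> float:
    """Percent of the commit level at which unloading would start."""
    least_aggressive = 99.0
    most_aggressive = rate_low_pct + 1.0  # core.commit_control.rate.low + 1%
    if actual_remaining >= scheduled_remaining:
        return least_aggressive           # on or ahead of schedule
    if scheduled_remaining <= 0:
        return most_aggressive            # no scheduled budget left at all
    # Assumption: aggressiveness grows linearly with the budget deficit.
    deficit = (scheduled_remaining - actual_remaining) / scheduled_remaining
    deficit = min(deficit, 1.0)
    return least_aggressive - deficit * (least_aggressive - most_aggressive)


# Hypothetical rate.low of 80%, so the threshold stays within 81%..99%.
print(unload_threshold_pct(216, 216, 80))  # on schedule
print(unload_threshold_pct(216, 108, 80))  # half of the scheduled budget is gone
print(unload_threshold_pct(216, 0, 80))    # budget exhausted
```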

This is a permanent feature of the IRP Commit Control algorithm.

1.3.3.2 Trigger commit improvements by collector

Commit control improvements are made after the flows carrying traffic are probed and IRP has fresh and relevant probe results. Subsequent decisions are made based on these results and this makes them more relevant. Still, probing takes some time and in case of fluctuating traffic patterns the improvements made will have reduced impact due to flows ending soon and being replaced by other flows that have not been probed or optimized.

For networks with very short flows (average flow duration under 5 minutes), probing represents a significant delay. To reduce the time to react to possible overload events, IRP can trigger commit control improvements on collector events. When the Flow Collector detects possible overload events for some providers, IRP uses data about past probed destinations to start unloading overloaded providers early. This data is incomplete and somewhat outdated, but it still gives IRP the opportunity to reduce the wait time and prevent possible overloads. Later on, when probes are finished, another round of improvements is made if needed.

Because the first round of improvements is based on older data, some of the improvements might become irrelevant very soon. This means that routes fluctuate more than necessary while on average yielding a reduced benefit. For this reason the feature is disabled by default. Enabling it is a tradeoff that should be weighed against the benefit of a faster reaction time of the Commit Control algorithm.

This feature is configured via the parameter core.commit_control.react_on_collector. The IRP Core service must be restarted after enabling or disabling this feature.

1.3.3.3 Commit Control improvements on disable and re-enable

IRP up to version 2.2 preserved Commit Control improvements when the function was disabled globally or for a specific provider. The intent of this behavior was to reduce route fluctuation. Over time, these improvements were overwritten by new improvements.

We found that this behavior ran contrary to customers’ expectations and needs. Usually, when this feature is disabled, it is done to address a more urgent and important need. Past Commit Control improvements were getting in the way of addressing this need and were causing confusion. IRP versions starting with 2.2 align this behavior with customer expectations:

- when Commit Control is disabled for a provider (peer.X.cc_disable = 1), this Provider’s Commit Control improvements are deleted;

- when Commit Control is disabled globally (core.commit_control = 0), ALL Commit Control improvements are deleted.
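The two rules above can be sketched as a filter over stored improvements. The record layout (`kind`, `provider`, `prefix`) and the function name are hypothetical and serve only to illustrate which entries are removed.

```python
def purge_commit_control(improvements, cc_enabled_globally, cc_disabled_providers):
    """Return the improvements that survive after Commit Control is disabled."""
    kept = []
    for imp in improvements:
        if imp["kind"] != "commit_control":
            kept.append(imp)      # other improvement types are not affected
        elif not cc_enabled_globally:
            continue              # core.commit_control = 0: delete all of them
        elif imp["provider"] in cc_disabled_providers:
            continue              # peer.X.cc_disable = 1: delete for provider X
        else:
            kept.append(imp)
    return kept


# Hypothetical stored improvements.
improvements = [
    {"kind": "commit_control", "provider": 1, "prefix": "192.0.2.0/24"},
    {"kind": "commit_control", "provider": 2, "prefix": "198.51.100.0/24"},
    {"kind": "performance", "provider": 1, "prefix": "203.0.113.0/24"},
]

# Commit Control disabled for provider 1 only.
print(purge_commit_control(improvements, True, {1}))
# Commit Control disabled globally.
print(purge_commit_control(improvements, False, set()))
```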

1.3.3.4 Provider load balancing

Provider load balancing is a Commit Control related algorithm that allows a network operator to evenly balance traffic over multiple providers, or over multiple links with the same provider.

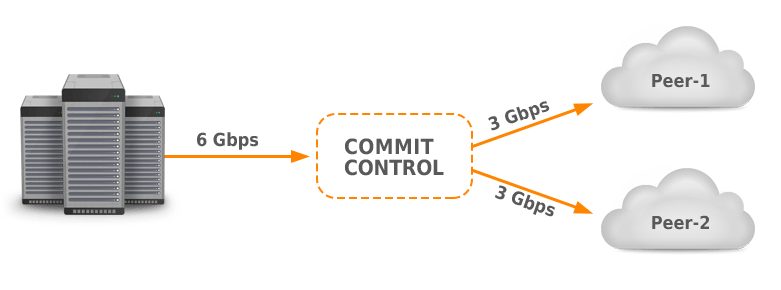

For example, a network with an average bandwidth usage of 6Gbps has two separate ISPs. The network operator wants (for performance and/or cost reasons) to push 3Gbps evenly over each provider. In this case, both upstreams are grouped together (see peer.X.precedence), and the IRP system passively routes traffic to achieve an even traffic distribution. Provider load balancing is enabled by default via parameter peer.X.group_loadbalance.

Figure 1.3.4: Provider load balancing
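The arithmetic behind the 6Gbps example can be sketched as follows. The function name and the provider labels are hypothetical; the sketch only computes how far each provider is from an even share and says nothing about how IRP actually moves traffic.

```python
def balance_shift_mbps(usage_mbps: dict) -> dict:
    """Traffic each provider should gain (+) or shed (-) to reach an even split."""
    target = sum(usage_mbps.values()) / len(usage_mbps)
    return {name: target - used for name, used in usage_mbps.items()}


# Two grouped upstreams carrying 6Gbps in total, currently split unevenly.
usage = {"ISP-A": 4000.0, "ISP-B": 2000.0}
print(balance_shift_mbps(usage))
```

In this case ISP-A should shed 1000 Mbps and ISP-B should gain the same amount, leaving 3Gbps on each.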

1.3.3.5 Commit control of aggregated groups

Customers can deploy network configurations with many actual links going to a single ISP. The additional links can serve various purposes: to provision sufficient capacity when requirements are too large to be fulfilled over a single link, to interconnect different points of presence on either the customer side (in a multiple routing domain configuration) or the provider side, or for redundancy. Individually, all these links are configured in IRP as separate providers. When the customer has an agreement with the ISP that imposes an overall limitation on bandwidth usage, these providers are grouped together in IRP so that it can optimize the whole group.

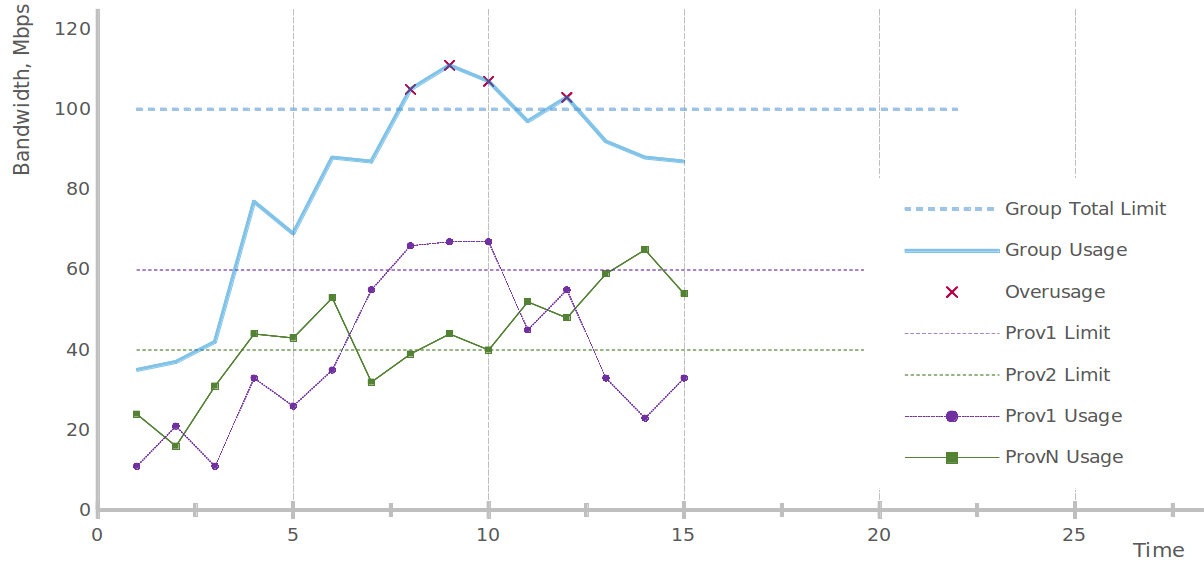

The rationale of this feature, as illustrated in Figure 1.3.5, is that if overusages on one provider in the group are compensated by underusages on another provider, there is no need to take any action, since overall the commitments made by the customer to the ISP have not been violated. The Commit Control algorithm takes action only when the sum of bandwidth usage on all providers in the group exceeds the sum of bandwidth limits for the same group of providers.

Figure 1.3.5: Commit control of aggregated groups

The image above shows that many overusages on the green line are compensated by underusages on the purple line, so that the group usage stays below the group total limit. Only when the traffic on the purple line increases significantly, and underusages on the other providers in the group are no longer sufficient to compensate for the overusages, does Commit Control identify overusages (highlighted with a red x on the drawing above) and take action by rerouting some traffic towards providers outside the group.
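The group-level check can be sketched as a comparison of sums. The function name, the provider labels and the sample numbers are hypothetical; only the rule "act when the summed usage exceeds the summed limits" comes from the text.

```python
def group_overusage_mbps(usage_mbps: dict, limit_mbps: dict) -> float:
    """Bandwidth by which the whole group exceeds its combined limit."""
    excess = sum(usage_mbps.values()) - sum(limit_mbps.values())
    return max(0.0, excess)


limits = {"green": 500.0, "purple": 500.0}

# Green is over its own limit, but purple's underusage compensates.
print(group_overusage_mbps({"green": 600.0, "purple": 300.0}, limits))

# Purple grows as well: the group as a whole is now over its combined limit.
print(group_overusage_mbps({"green": 600.0, "purple": 550.0}, limits))
```

Only the second case yields a non-zero overusage, which is when traffic would be rerouted to providers outside the group.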

To configure providers that are optimized as an aggregated group: 1) configure the providers with the same precedence in order to form a group; 2) distribute the overall 95th limitation across the providers in the group as appropriate; 3) disable load balancing for the group via parameter peer.X.group_loadbalance.

1.3.3.6 95th calculation modes

Commit control uses the 95th centile to determine whether bandwidth is below or above commitments. There are different ways to account for Outbound and Inbound traffic when determining the 95th value.

IRP supports the following 95th calculation modes:

- Separate 95th for in/out: The 95th values for inbound and outbound traffic are independent, and consequently bandwidth control for each is performed independently. In this calculation mode IRP monitors two different 95th values, one for inbound and one for outbound traffic.

- 95th from greater of in, out: At each time-point the greater of inbound or outbound bandwidth usage value is used to determine 95th.

- Greater of separate in/out 95th: 95th values are determined separately for inbound and outbound traffic, and the larger value is used to verify whether commitments have been met.
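The difference between the three modes is easiest to see on a small data set. The sketch below is an illustration only: the nearest-rank percentile method, the function names and the sample values are assumptions, and they are not the values accepted by peer.X.95th.mode.

```python
def p95(samples):
    """95th percentile by the nearest-rank method (an assumed method)."""
    ordered = sorted(samples)
    rank = (95 * len(ordered) + 99) // 100   # ceil(0.95 * n) in integer math
    return ordered[rank - 1]


def separate_in_out(inbound, outbound):
    """Separate 95th for in/out: two independent values."""
    return p95(inbound), p95(outbound)


def p95_from_greater(inbound, outbound):
    """95th from greater of in, out: take the per-sample maximum first."""
    return p95([max(i, o) for i, o in zip(inbound, outbound)])


def greater_of_separate(inbound, outbound):
    """Greater of separate in/out 95th: compute both, keep the larger."""
    return max(p95(inbound), p95(outbound))


# 20 samples in Mbps; inbound and outbound each spike once, at different times.
inbound = [900] + [100] * 19
outbound = [100, 800] + [100] * 18

print(separate_in_out(inbound, outbound))
print(p95_from_greater(inbound, outbound))
print(greater_of_separate(inbound, outbound))
```

With this data each direction has a single spike that falls into its own discarded top 5%, so the first and third modes report 100. The second mode sees two spikes in the combined series, only one of which is discarded, and reports 800.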

Refer to peer.X.95th.mode.