The triumvirate of network performance metrics are latency, loss and jitter. Today, we’re going to take a look at how each of them — especially latency and packet loss — determine application performance.

The triumvirate of network performance metrics are latency, loss and jitter. Today, we’re going to take a look at how each of them — especially latency and packet loss — determine application performance.Almost all applications use TCP, the Transmission Control Protocol, to get their data from A to B; 85% of the internet’s traffic is TCP. One interesting aspect of TCP is that it completely hides the packet-based nature of the network from applications. Whether an application hands a single character to TCP once in a while (like Telnet or SSH) or dumps a multi-megabyte file as fast as it can (FTP) or anything in between, TCP takes the data, puts it in packets, and sends it on its way over the network. The internet is a scary place for packets trying to find their way: it’s not uncommon for packets to be lost and never make it across, or to arrive in a different order than they were transmitted. TCP retransmits lost packets and puts data back in the original order if needed before it hands over the data to the receiver. This way, applications don’t have to worry about those eventualities.

Latency

Early networking protocols were often built for operation on LANs or campus networks, where packets can travel from one end of the network to the other in mere milliseconds. These protocols wouldn’t always work so well over the internet, where it can easily take a tenth of a second for a packet to circumnavigate the globe before it gets to its destination. If you then also want a return packet, this number doubles to 200 milliseconds. After all, the speed of light pulses in optical fiber is 200,000 km or 124,000 miles per second, so the round-trip-time (RTT) is one millisecond per 100 km / 62 mi.

Queuing theory (or a trip to the post office) tells us that the busier a link gets, the longer packets have to wait. For instance, a 10 Gbps link running at 8 Gbps (80% utilization) means that on average, when a packet arrives, there’s four others already waiting. At 99% utilization, this queue grows to 99 packets. Back when links were much slower, this could add a good amount of extra latency, but at 10 Gbps, transmitting 99 packets of an average 500 bytes just 0.248 milliseconds. So these days, buffering in routers in the core of the network isn’t going to add a meaningful amount of delay unless links are massively oversubscribed—think 99.9% utilization or higher.

TCP has a number of mechanisms to get good performance in the presence of high latencies. The main one is to make sure enough packets are kept “in flight”. Simply sending one packet and then waiting for the other side to say “got it, send then next one” doesn’t cut it; that would limit throughput to five packets per second on a path with a 200 ms RTT. So TCP tries to make sure it sends enough packets to fill up the link, but not so many that it oversaturates the link or path. This works well for long-lived data transfers, such as big downloads.

But it doesn’t work so well for smaller data transfers, because in order to make sure it doesn’t overwhelm the network, TCP uses a “slow start” mechanism. For a long download, the slow start portion is only a fraction of the total time, but for short transfers, by the time TCP gets up to speed, the transfer is already over. Because TCP has to wait for acknowledgments from the receiver, more latency means more time spent in slow start. Web browser performance used to be limited by slow start a lot, but browsers started to reuse TCP sessions that were already out of slow start to download additional images and other elements rather than keep opening new TCP sessions. However, applications written by software developers with less networking experience may use simple open-transfer-close-open-transfer-close sequences that work well on low latency networks but slow down a lot over larger distances. (Or on bandwidth-limited networks, which also introduce additional latency.)

Of course nearly every TCP connection is preceded by a DNS lookup. If the latency towards the DNS server is substantial, this slows down the entire process. So try to use a DNS server close by.

Packet loss

Ideally, a network would never lose a single packet. Of course, in the real world they do, and for two reasons. Every transmission medium will flip a bit once in a while, and then the whole packet is lost. (Wireless typically sends extra error correction bits, but those can only do so much.)

If such an error occurs, the lost packet needs to be retransmitted. This can hold up a transfer. Suppose we’re sending data at a rate of 1000 packets per second on a 200 ms RTT connection. This means when the sender sends packet 500, packets 401 – 499 are still in flight, and the receiver has just sent an acknowledgment for packet 400. However, acknowledgments 301 – 399 are in flight in the other direction, so the latest acknowledgment the sender has seen is 300. So if packet 500 is lost, the sender won’t notice until it sees acknowledgment 499 being followed by 501. By that time, it’s transmitting packet 700. So the receiver will see packets 499, 501 – 700, 500, and then 701 and onward. This means that the receiver must buffer packets 501 – 700 while it waits for 500—don’t forget, it’s the sacred duty of TCP to deliver all data in the correct order.

Usually the above is not a problem. But if network latency or packet loss get too high, TCP will run out of buffer space and the transfer has to stop until the retransmitted lost packet has been received. In other words: high latency or high loss isn’t great, but still workable, but high latency and high loss together can slow down TCP to a crawl.

However, it gets worse. The second reason packets get lost is because TCP is sending so fast that router/switch buffers fill up faster than packets can be transmitted. When a buffer is full and another packet comes in, the router or switch can only do one thing: “drop” the packet. Because TCP can’t tell the difference between a packet lost because of a flipped bit or because of overflowing buffers in the network, it’ll assume the latter and slow down. In our example above, this slowdown isn’t too severe, as subsequent packets keep being acknowledged. This allows TCP to use “fast retransmit”.

However, fast retransmit doesn’t work if one of the last three packets in a transfer gets lost. In that situation, TCP can’t tell the difference between a single lost packet or the situation where the network is massively overloaded and nothing gets through. So now TCP will let its timeout timer count down to zero, which often takes a second, and then tries to get everything going again—in slow start mode. This problem will often bite web sessions, which tend to be short, where a page takes seconds to completely finish loading, although most of it appeared quickly.

Another reason for TCP to end up in a timeout situation is if too many packets in a short time. At this point, TCP has determined that the network can only bear very conservative data transfer speeds, and slow start really does its name justice. Often, in this case it’s faster to stop a download and restart it rather than to wait for TCP to recover.

Jitter

Jitter is the difference between the latency from packet to packet. Obviously, the speed of light isn’t subject to change, and fibers tend to remain the same length. So latency is typically caused by buffering of packets in routers and switches terminating highly utilized links. (Especially on lower bandwidth links, such as broadband or 3G/4G links.) Sometimes a packet is lucky and gets through fast and sometimes the queue is longer than usual. For TCP, this isn’t a huge problem, although this means that TCP has to use a conservative value for its RTT estimate and timeouts will take longer. However, for (non-TCP) real-time audio and video traffic, jitter is very problematic, because the audio/video has to be played back at a steady rate. This means the application either has to buffer the “fast” packets and wait for the slow ones, which can add user-perceptible delay, or the slow packets have to be considered lost, causing dropouts.

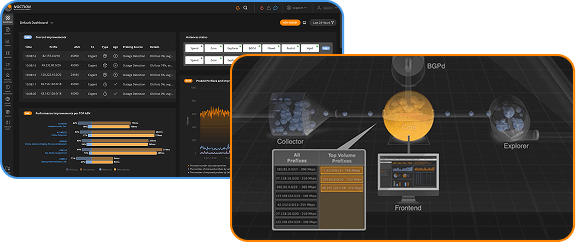

In conclusion, in networks that use multiple connections to the internet, it can really pay off to avoid paths that are much longer and thus incur a higher latency than alternative paths to the same destination, as well as congested paths with elevated packet loss. At Noction we made it to the top of automating the path selection process. Make sure you check our Intelligent Routing Platform and how it evaluates packet loss and latency across multiple providers to choose the best-performing route.

Read this article in French – Les effets de la latence et de la perte de paquets sur la performance réseau